Artificial Intelligence (AI) is no longer just a future idea; it has become a key business priority. It is quickly changing industries and opening up new ways to improve customer support, help employees work faster, automate routine processes, and make better use of data.

Many companies are increasing spending too: 74% of organizations plan to raise their AI budgets in 2025, and 92% expect to increase investment over the next three years. Still, even with all this momentum, moving from “we want AI” to “AI is working in our business” is often difficult. The real question is not whether AI can change a business, but how to bring it in without wasting time and money.

This article explains the most common problems organizations face when putting AI into practice, plus practical ways to deal with them so projects move past proof-of-concept and deliver real, long-lasting value. If you want expert help with this process, using Addepto AI expertise can be a key step in turning a plan into working results.

What Are the Most Common Challenges in AI Implementation?

Starting with AI can feel confusing, with many ways to go wrong. AI can bring big benefits, but the day-to-day work of putting it into place reveals technical, operational, and people-related problems. Knowing these common blockers is the first step to removing them and building a smoother path to adoption.

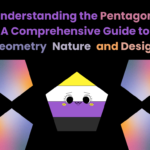

Data Quality Issues and Bias Risks

Data is the main input for AI. If the data is wrong or messy, AI models will perform poorly and produce weak insights. In an IBM Institute of Business Value report, 45% of respondents pointed to data accuracy and possible bias as major concerns. Data that is inconsistent, incomplete, or incorrect can break an AI project early, leading to unstable results. For example, if different systems store the same information in different formats or units, the model will learn mixed signals and produce unreliable predictions.

Bias in training data is also a serious ethical and business risk. If the data reflects past unfairness, the AI will often repeat it. This can create unfair outcomes, such as hiring tools that favor certain groups or loan models that reject qualified applicants. Fixing data quality and bias is not just a technical task; it is a basic requirement for fair and dependable AI.

Insufficient or Fragmented Data Availability

Even with clean data, many organizations do not have enough of their own data to adapt AI to their needs. About 42% of respondents said they did not have enough proprietary data, which is needed to build more specific solutions and gain an advantage over competitors. Without enough data, it becomes harder to build deep learning models, run them reliably in production, and improve them over time using methods like MLOps.

On top of that, data is often split across departments and older systems. This makes it hard to pull everything together in a single format that works for AI training. When data stays in silos, AI projects often end up with limited insights and limited impact. Without a clear plan for collecting and sharing relevant internal data, AI efforts may stay generic and fail to reach their full value.

AI Skills Shortage and Lack of Expertise

Companies need people who know how to build and run AI systems, but demand is growing faster than supply. Around 42% of organizations say the lack of talent and specialized in-house knowledge is a major blocker. This gap is not only about hiring data scientists. Companies also need people who can lead AI programs, fix difficult problems, remove blockers, and spread good practices across teams.

Many businesses focus on hiring outside experts but do not train their current staff. This can create a “two-level workforce,” where a small group understands AI and the rest does not. When employees lack knowledge and hands-on experience, they may struggle to use AI tools well. That can cause confusion, lower adoption, and reduce the return from AI spending.

Integration Difficulties with Existing Systems

Adding AI to current IT systems is often hard because many older systems were not made for AI. Legacy software may lack the computing power, flexibility, and compatible data formats needed for smooth integration. This is a common issue in organizations where older systems run key operations.

If the infrastructure cannot process large amounts of data quickly, AI work slows down. Running AI workflows on older enterprise storage can lead to slow performance, random failures, and network or storage problems as more users rely on the system. Without planning and investment in the right infrastructure, businesses risk disruptions and a mismatch between modern AI needs and older technology.

Scalability and Production Barriers

One common problem is the “proof-of-concept (PoC) trap.” Even when supported by PoC development services, a pilot may look promising but never grow into full production use. This often happens because the project is built in a silo, or because the team focuses more on the technology than on the business problem.

AI often needs large amounts of data to build deep learning models, use them in production, and keep improving them. At production scale, AI systems may need to handle huge numbers of small files and very large datasets for training, real-time processing, and archiving. Standard enterprise storage often cannot handle this well. Bottlenecks might only appear once the system faces real production workloads, leading to slowdowns, weak inference performance, and projects that fail to deliver the expected ROI.

Funding Limitations and Unclear ROI

The cost to build, maintain, and support AI tools is a major concern, with 50% of respondents calling it their biggest worry, especially during uncertain economic periods. Even if AI looks promising long term, short-term results can be hard to predict. Also, 42% of respondents cite “inadequate financial justification or business case” as a major challenge.

A good AI business case needs clear links to outcomes, such as faster work, new revenue, or lower risk. If use cases do not show clear benefits (like reduced labor costs, faster product releases, or better customer engagement), it is harder to get funding. AI projects can also strain budgets due to unexpected data issues, hiring costs, energy use, and longer-than-planned timelines.

Security, Privacy, and Confidentiality Concerns

As AI becomes part of daily operations, it also becomes a target for attacks. Around 40% of respondents say data privacy or confidentiality is a major barrier. AI systems can introduce new risks, such as data poisoning (where attackers feed bad data into training) or adversarial attacks (where inputs are designed to trick the model), which can lead to wrong outputs and business disruption.

If sensitive data is handled poorly, companies can face privacy issues and break rules like GDPR or CCPA. An AI model that focuses only on performance could make choices that clash with regulations or company values. Strong cybersecurity, good data governance, and responsible AI practices are key for protecting information, keeping trust, and avoiding legal and reputation damage.

Ethics, Bias, and Governance Gaps

Ethics goes beyond privacy. Bias and unethical AI use are major concerns, with 80% of AI users and providers calling bias a serious issue. If training data includes bias, the model will often repeat it in real life. This can create unfair outcomes in hiring, lending, and other areas. Predictive policing tools, for example, have been criticized for focusing too much on certain communities, repeating existing inequalities.

Without clear governance, AI work can run into compliance problems and unclear ownership. Questions about who controls the model, who owns the data, and who is responsible for decisions can slow projects down. Clear ethical rules and governance structures help meet regulations, build trust, and keep AI use responsible while still supporting innovation.

Resistance to Change and Cultural Obstacles

People issues can be one of the biggest blockers. AI can change how teams work, what tools they use, and even who makes decisions. This can lead to resistance, especially in traditional organizations. The strong hype around AI has also created many opinions and misunderstandings across employees, managers, and executives.

About 85% of employees believe AI will affect their jobs in the next two to three years, and people often disagree on whether that change will be good or bad. Fear and uncertainty can reduce creativity and slow adoption. Leaders are 2.4 times more likely to say employees are not ready, even though employees often use generative AI tools three times more than leaders expect. To succeed, companies need to reduce fear, build readiness, and create a culture that can adapt.

Explainability and Transparency Challenges

Many advanced AI models, especially deep learning models, act like “black boxes,” meaning it is hard to explain how they reach a decision. As models become more complex, it becomes harder to explain, manage, and regulate their outcomes. This lack of clarity can block trust, especially in healthcare, finance, and law, where the reasons behind a decision matter.

For example, a credit-scoring model might reject a loan without giving a clear reason. That can upset customers, raise regulatory concerns, and make it harder to spot bias in the model. If teams cannot explain decisions, they also struggle to debug, audit, and get buy-in from users and stakeholders, which limits real-world use.

How Do Data Quality and Availability Affect AI Outcomes?

Data sits at the center of AI success. It powers algorithms, shapes models, and drives insights. So if data quality or access is weak, AI results will also be weak, and projects can stall instead of delivering improvements.

Impacts of Inaccurate, Incomplete, or Biased Data

“Garbage in, garbage out” fits AI perfectly. If training data is inaccurate, incomplete, or inconsistent, predictions become unreliable and problems spread. For example, a customer service chatbot trained on incomplete or outdated logs may give wrong answers, frustrating customers and lowering service quality.

In healthcare, many datasets are fragmented and incomplete. If patient records are inconsistent, AI predictions may be wrong, leading to poor decisions, wasted spending, and risks to patient care. Biased data can also repeat inequality. If a hiring tool learns from past hiring data that reflects unfair patterns, it may favor certain groups and create discrimination, with ethical and legal consequences. Data quality is not a small detail; it affects real people and real outcomes.

Consequences of Insufficient Proprietary or Domain Data

Public datasets can help you start, but real advantage often comes from internal, domain-specific data. Many organizations struggle here: without enough relevant internal data, it is hard to adapt AI models to the details of a specific business, customer base, or operating environment.

This often leads to generic solutions that do not deliver strong insights. Models may be less accurate and less useful, reducing business value. Also, without a steady stream of quality internal data, it is harder to keep training and improving models through approaches like reinforcement learning and MLOps, which limits long-term usefulness.

Techniques to Improve Data Quality for AI

Fixing data problems takes a proactive mix of steps. Start with data cleansing: regularly review datasets to remove duplicates, fix inconsistencies, and fill missing values. Automation tools can reduce manual work while keeping data consistent. Next, focus on data integration. Bring data from different sources into one consistent structure. Extract, Transform, Load (ETL) systems can help keep data flowing in a reliable format that AI models can use.
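The cleansing and integration steps above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not a production pipeline: the record layout, the field names (`customer_id`, `country`, `weight`, `unit`), and the choice of the median as a fill value are all assumptions made for the example.

```python
from statistics import median

# Hypothetical raw records merged from two systems; field names are illustrative.
RAW_RECORDS = [
    {"customer_id": "C1", "country": "us", "weight": 2.0, "unit": "kg"},
    {"customer_id": "C1", "country": "us", "weight": 2.0, "unit": "kg"},    # exact duplicate
    {"customer_id": "C2", "country": " US ", "weight": 4.4, "unit": "lb"},  # other unit, messy text
    {"customer_id": "C3", "country": "DE", "weight": None, "unit": "kg"},   # missing value
]

LB_TO_KG = 0.45359237


def clean(records):
    """Normalize formats and units, drop duplicates, then fill missing weights."""
    seen = set()
    cleaned = []
    for rec in records:
        weight = rec["weight"]
        # Convert every weight to kilograms so the model sees a single unit.
        if weight is not None and rec["unit"] == "lb":
            weight = round(weight * LB_TO_KG, 3)
        row = {
            "customer_id": rec["customer_id"],
            "country": rec["country"].strip().upper(),
            "weight_kg": weight,
        }
        # Skip rows that are identical to one already kept.
        key = tuple(row.items())
        if key in seen:
            continue
        seen.add(key)
        cleaned.append(row)

    # Fill missing weights with the median of the known values (an assumed policy).
    known = [r["weight_kg"] for r in cleaned if r["weight_kg"] is not None]
    fallback = median(known) if known else None
    for row in cleaned:
        if row["weight_kg"] is None:
            row["weight_kg"] = fallback
    return cleaned


if __name__ == "__main__":
    for row in clean(RAW_RECORDS):
        print(row)
```

In practice the fill strategy deserves thought: a median is a reasonable default for a sketch, but dropping the row or flagging it for review may be safer for high-stakes fields.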

If proprietary data is limited, there are other options:

- Data augmentation: create more training examples by paraphrasing text, translating, or adding small changes.

- Synthetic data: generate artificial data through simulation or algorithms when real data is unavailable or sensitive.

- Data partnerships: work with non-competing companies or research groups to access more diverse datasets.

- Federated learning: train models across decentralized datasets without sharing raw data, helping with security and compliance.
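To make the federated learning option above more concrete, here is a toy sketch of federated averaging in plain Python. It assumes a one-parameter model (y is roughly w times x) and two hypothetical clients; real systems add secure aggregation, communication handling, and far larger models. The point is only that the server sees model weights, never the raw data.

```python
def local_update(w, data, lr=0.01, epochs=20):
    """Run a few gradient-descent steps for y = w * x on one client's private data."""
    for _ in range(epochs):
        grad = sum(2 * (w * x - y) * x for x, y in data) / len(data)
        w -= lr * grad
    return w


def federated_average(client_weights, client_sizes):
    """Combine client models, weighting each by its number of samples."""
    total = sum(client_sizes)
    return sum(w * n for w, n in zip(client_weights, client_sizes)) / total


def train(clients, rounds=30):
    """Each round: clients train locally, the server averages only the weights."""
    w = 0.0
    for _ in range(rounds):
        updates = [local_update(w, data) for data in clients]
        w = federated_average(updates, [len(d) for d in clients])
    return w


# Hypothetical private datasets; both follow y = 2 * x and never leave the client.
CLIENTS = [
    [(1.0, 2.0), (2.0, 4.0)],
    [(3.0, 6.0), (4.0, 8.0), (5.0, 10.0)],
]

if __name__ == "__main__":
    print(f"learned weight: {train(CLIENTS):.4f}")
```

Weighting by sample count keeps a small client from pulling the shared model as hard as a large one, which is the usual starting point for this technique.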

Why Is the AI Skills Gap a Major Implementation Challenge?

AI is improving fast, and the need for specialized talent has grown quickly. This has created a major skills gap that blocks many organizations from building and scaling AI systems. Without the right people, even strong AI plans can stay stuck at the idea stage instead of producing real results.

Key Areas Lacking Talent and Expertise

The skills gap shows up in several places. There is a shortage of specialists (data scientists, machine learning engineers, and AI architects) who can design, build, and deploy advanced models. These roles matter not only for initial development but also for solving hard problems, removing blockers, and setting best practices.

There is also a wider problem: low AI knowledge across the general workforce. Companies may roll out generative AI or agentic AI tools without enough guides, training, or learning resources. This creates a gap where people do not know how to use the tools well, which hurts adoption. Over time, it can create a split between AI-proficient employees and everyone else, making it harder to scale AI across departments.

Solutions for Bridging the AI Talent Shortage

Closing the talent gap usually requires both internal development and outside support. One of the best steps is to train and upskill current employees through programs, workshops, and certifications that cover basic to advanced AI topics. Hands-on experience and ongoing learning help build skills inside the company. Microsoft’s approach under CEO Satya Nadella, which combined hiring data scientists with reskilling the broader workforce to think with AI, is a useful example.

Companies can also use partnerships to bring in missing skills. Working with AI vendors, research groups, and consulting firms gives access to specialized knowledge without building everything internally. Low-code/no-code AI platforms can also make it easier for non-specialists to build and adjust AI solutions. In addition, building wider AI knowledge across the company helps shift culture and supports broader adoption. Examples include making AI risk training mandatory for executives and using reverse mentoring, where AI specialists coach leaders.

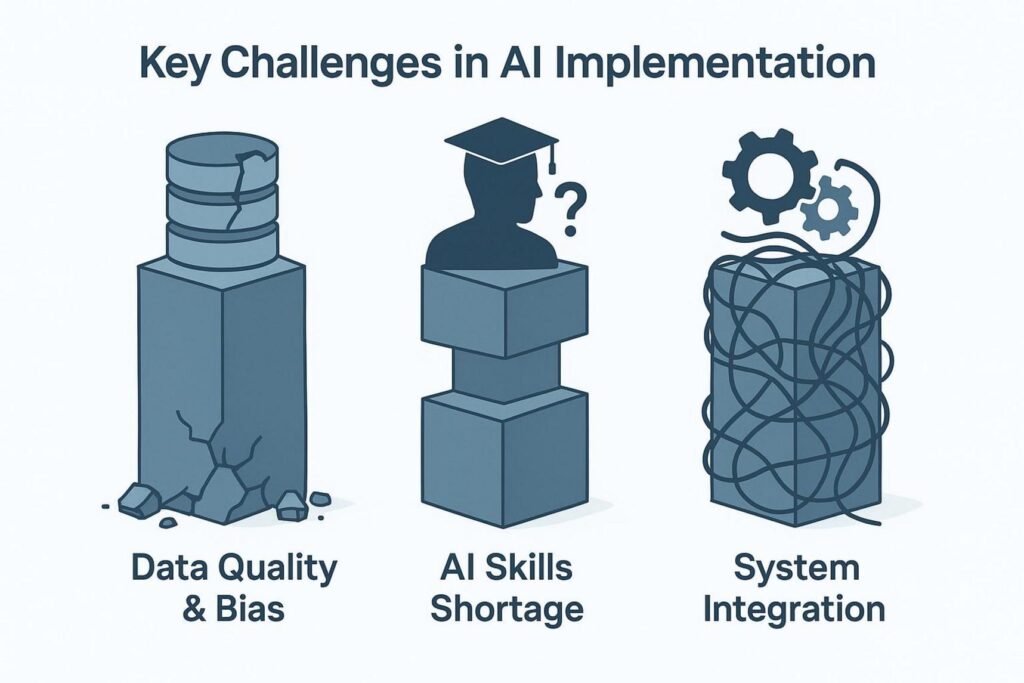

How Can Organizations Overcome Integration and Scalability Challenges?

Moving from an AI prototype to a stable, production-ready system often brings major technical and operational challenges. Many AI projects fail because they cannot fit well into current systems or cannot handle real-world scale.

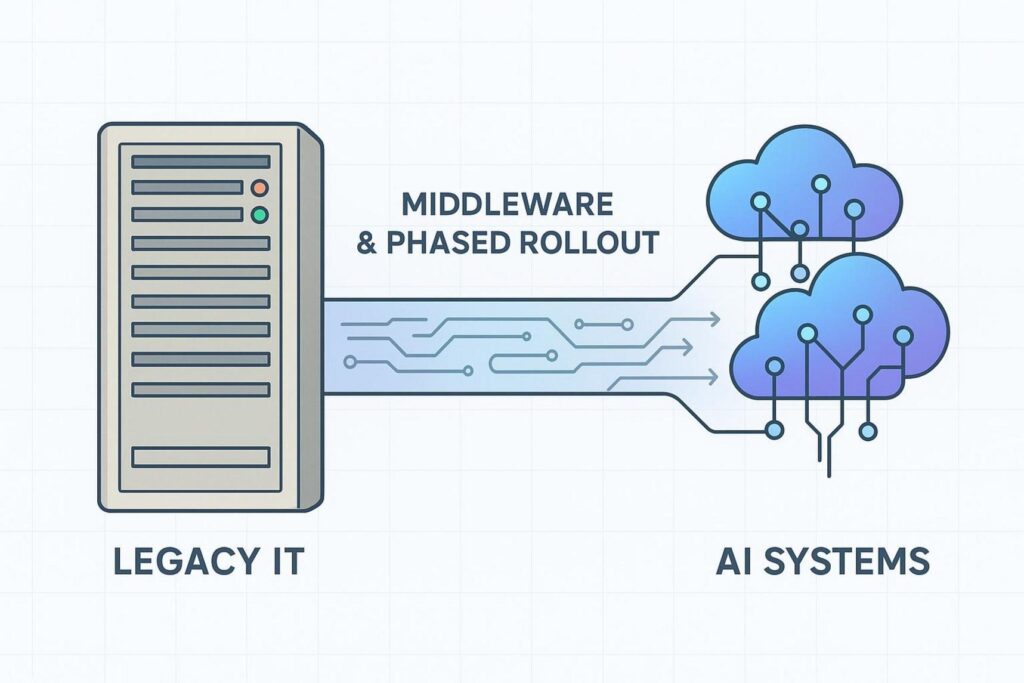

Barriers to Integrating AI with Legacy IT Infrastructure

A major barrier is that new AI tools often do not match older IT systems. Legacy systems were not built for heavy computation, modern data formats, or fast processing. Many organizations lack the modern infrastructure needed to process large volumes of data quickly.

Trying to run AI on outdated storage or systems can cause slow apps, random failures, bottlenecks, and instability. These problems grow as more users and more data are added. If the infrastructure is not updated, integration becomes a constant struggle and makes AI look less valuable than it should be.

Strategies for Scalable AI Deployment and Adoption

To handle integration and scaling, organizations need a clear plan. Start with an integration roadmap and roll out changes in phases. A step-by-step rollout makes it easier to test and adjust without disrupting operations, and it helps teams build confidence.

To connect AI tools with older systems, invest in middleware that helps different systems communicate and share data. Also, link AI projects to business goals from the beginning, so the focus stays on real impact and scalable use cases instead of “interesting technology.” This helps avoid the PoC trap.

Scaling also depends on infrastructure design. A data-first strategy means planning for data volume, speed, privacy, and tracking from day one. AI-ready infrastructure often needs the right storage, networking, and processing, especially for GPU-heavy workloads. Cloud platforms built for AI (AWS, Google Cloud, Microsoft Azure) and distributed computing can scale resources based on demand and help avoid bottlenecks. Regular infrastructure reviews also help teams spot needs early and upgrade in time.

What Are the Security and Privacy Risks in AI Projects?

As AI becomes more central to business operations, it attracts more attacks. Because AI relies on large datasets and complex models, it introduces security and privacy risks that need careful planning and strong protections.

Vulnerabilities Introduced by AI Systems

AI can become a business risk without strong cybersecurity. One issue is data poisoning, where attackers add harmful or incorrect data into training datasets so the model learns the wrong patterns. This can lead to wrong decisions and business disruption.

Another threat is adversarial attacks, where inputs are designed to trick a trained model. For example, small changes to an image might cause a model to misclassify it, or attackers might use AI to create very convincing phishing messages. These attacks can damage system integrity, cause losses, and reduce trust. AI can also create legal and reputation risks if its goals are not aligned with ethical rules and it makes choices that break policies or regulations.

Protecting Sensitive Data during AI Implementation

AI needs a lot of data, so privacy and confidentiality are major concerns. Protecting data is about following laws like GDPR and CCPA, but it is also about customer trust and keeping business information private. Start by reducing exposure of sensitive data with techniques like anonymization (removing personal identifiers) and differential privacy (adding noise to reduce the chance of identifying individuals while keeping overall patterns useful). Encryption (at rest and in transit) is also a basic requirement.
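The two techniques named above can be illustrated with a short sketch. It assumes hypothetical field names (`name`, `email`, `age`) and uses the Laplace mechanism, one common way to add differential-privacy noise to a count. Note that removing direct identifiers alone does not guarantee anonymity, since other fields can still be combined to re-identify people.

```python
import random

DIRECT_IDENTIFIERS = {"name", "email"}  # hypothetical field names


def strip_identifiers(record):
    """Return a copy of the record without direct personal identifiers."""
    return {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Noisy count of matching records (Laplace mechanism, sensitivity 1)."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for r in records if predicate(r))
    # A counting query changes by at most 1 when one person is added or removed,
    # so the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1 / epsilon, rng)


if __name__ == "__main__":
    people = [
        {"name": "Ann", "email": "ann@example.com", "age": 34},
        {"name": "Bob", "email": "bob@example.com", "age": 51},
        {"name": "Cy", "email": "cy@example.com", "age": 47},
    ]
    safe = [strip_identifiers(p) for p in people]
    print(safe[0])
    print(private_count(safe, lambda r: r["age"] > 40, epsilon=1.0))
```

A smaller `epsilon` means more noise and stronger privacy; choosing it is a policy decision as much as a technical one.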

Organizations should also use strict access controls and audit logs so they can track who accessed data and how it was used. Federated learning can help again by keeping data in place while still training models. Regular privacy impact reviews and clear documentation of how AI uses data also help with compliance and trust.
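As a rough illustration of access controls combined with audit logging, the sketch below wraps dataset reads in a role check and records every attempt, allowed or not. The class name `AuditedStore`, the roles, and the dataset names are all hypothetical; a real deployment would rely on the organization's identity provider and a tamper-resistant log store.

```python
from datetime import datetime, timezone

# Hypothetical mapping of roles to the datasets they may read.
ROLE_PERMISSIONS = {
    "data_scientist": {"training_data"},
    "analyst": {"aggregates"},
}


class AuditedStore:
    """Dataset store that enforces role-based reads and logs every attempt."""

    def __init__(self, datasets, permissions):
        self._datasets = datasets
        self._permissions = permissions
        self.audit_log = []

    def read(self, user, role, dataset):
        allowed = dataset in self._permissions.get(role, set())
        # Record the attempt before returning or raising, so denials are visible too.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "role": role,
            "dataset": dataset,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{role} may not read {dataset}")
        return self._datasets[dataset]


if __name__ == "__main__":
    store = AuditedStore({"training_data": [1, 2, 3], "aggregates": {"n": 3}}, ROLE_PERMISSIONS)
    print(store.read("maria", "data_scientist", "training_data"))
    try:
        store.read("li", "analyst", "training_data")
    except PermissionError as exc:
        print("denied:", exc)
    print(len(store.audit_log), "audit entries")
```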

Best Practices for Enhancing AI Security

AI security works best when it is built into the full development lifecycle. Use secure coding from the start, including input validation and following security standards. Use AI threat detection tools and run regular cybersecurity risk reviews before launching new AI systems.

It also helps to create AI-specific incident response plans. For example, Microsoft updated its protocols in 2024 after the Midnight Blizzard attack, focusing on model security, stronger protection of sensitive logs, and AI-based monitoring to spot AI-driven phishing and automated credential stuffing. Using zero-trust architecture also strengthens defense by treating every request as untrusted unless verified with strong authentication and encryption. Companies like JPMorgan show how this approach supports safer AI adoption while using AI’s always-on monitoring to detect threats faster.

How Do Cost and ROI Concerns Impact AI Adoption?

AI promises better efficiency, new ideas, and stronger competition. But real implementation can conflict with budgets and the need to prove clear ROI. For many organizations, the financial case for AI remains a big blocker that affects how much they invest and which projects they start.

Challenges in Building a Strong Business Case for AI

A big issue is building a convincing financial case. Since 42% of respondents report this problem, it’s clear that many leaders still see AI costs as high, especially during uncertain economic periods. While long-term results may look strong, short-term benefits can be unclear, making it harder to secure budget approval.

AI projects can also grow expensive because of unexpected data issues, high salaries for specialists, power use for large computing, and long development timelines. Without a clear link to business outcomes, AI can look like a costly experiment, and projects may stall at the PoC stage.

Methods to Measure and Demonstrate AI ROI

To reduce skepticism, organizations need to track and show ROI clearly. Start by choosing specific use cases with measurable value, such as process automation, marketing content generation, faster product development, or better customer engagement. Then measure outcomes like lower labor costs, faster releases, or better customer satisfaction and retention.

Also look for new revenue options, like AI-powered products, personalization, or real-time decision support. Begin with small, low-risk pilots to generate proof and build momentum for larger funding. A full financial case should also include a risk review that compares investment costs with the cost of doing nothing, such as losing market share to AI-driven competitors or keeping slow manual processes.
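The arithmetic behind such a financial case is simple enough to sketch. The figures below are invented for illustration only, and the two helper functions are hypothetical names, not part of any standard library; the formulas are the usual ROI ratio (net gain divided by cost) and a simple payback period.

```python
import math


def roi(total_benefit, total_cost):
    """Return on investment as a fraction: (benefit - cost) / cost."""
    return (total_benefit - total_cost) / total_cost


def payback_months(upfront_cost, monthly_net_benefit):
    """Months until cumulative net benefit covers the upfront cost, or None if never."""
    if monthly_net_benefit <= 0:
        return None
    return math.ceil(upfront_cost / monthly_net_benefit)


if __name__ == "__main__":
    # Hypothetical pilot: all numbers are assumptions for the example.
    upfront_cost = 120_000      # build and integration
    monthly_run_cost = 5_000    # hosting, licences, support
    monthly_benefit = 20_000    # labor saved plus added revenue
    horizon_months = 24

    total_cost = upfront_cost + monthly_run_cost * horizon_months
    total_benefit = monthly_benefit * horizon_months

    print(f"ROI over {horizon_months} months: {roi(total_benefit, total_cost):.0%}")
    print("Payback (months):", payback_months(upfront_cost, monthly_benefit - monthly_run_cost))
```

The same structure can hold a "do nothing" scenario: estimate the monthly cost of lost market share or manual work and compare it against the pilot's net benefit.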

Approaches for Cost-Effective AI Implementation

To keep costs under control, organizations can take several steps:

- Use open-source tools where possible to reduce licensing costs and keep flexibility.

- Use flexible budgeting and agile planning so spending can shift as needs change.

- Track ROI throughout the project using metrics like productivity gains, revenue impact, or customer support improvements.

By using these approaches, companies can build a clearer business case and turn AI into a steady driver of growth instead of a budget risk.

What Role Do Ethics, Bias, and Governance Play in AI Success?

AI can deliver major benefits, but long-term success depends on more than technical skill. It also depends on ethical rules and strong governance. Ignoring these areas can create risk, reduce trust, and weaken the value AI is supposed to provide.

Risks of Algorithmic Bias and Unethical AI Use

Algorithmic bias is one of the biggest ethical concerns. AI learns from training data, so if that data includes past unfairness, the model can repeat it. This can create discriminatory outcomes. For example, AI in hiring or lending can wrongly exclude qualified people because of biased patterns in training data. Predictive policing has also faced criticism for focusing too heavily on specific communities, which can repeat existing social unfairness.

Unethical AI use can also include privacy violations, misuse of sensitive data, and unclear responsibility for decisions made by AI without human oversight. Without guardrails and transparency, AI can create legal problems, harm reputation, and reduce public trust.

Establishing Ethical Guidelines and Governance

To reduce these risks, organizations need clear ethical rules and strong governance. AI ethics focuses on maximizing positive impact while reducing harm. It covers data responsibility, privacy, fairness, explainability, resilience, and transparency. Organizations should regularly test models for bias using fairness checks and reduce unintended skew. Training on diverse datasets also lowers the risk of ignoring smaller groups.
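One simple fairness check of the kind mentioned above compares approval rates across groups. The sketch below uses made-up decisions and hypothetical group labels; the ratio it computes is often compared against a rule-of-thumb threshold of 0.8 (the "four-fifths rule" used in some employment contexts), though the right metric and threshold depend on the use case and jurisdiction.

```python
from collections import defaultdict


def selection_rates(decisions):
    """decisions: iterable of (group, approved) pairs. Returns approval rate per group."""
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group rate divided by highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody approved in any group, so rates are equal
    return min(rates.values()) / highest


# Hypothetical model outputs: group A approved 8 of 10, group B approved 5 of 10.
DECISIONS = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

if __name__ == "__main__":
    rates = selection_rates(DECISIONS)
    ratio = disparate_impact_ratio(rates)
    print(rates, f"ratio={ratio:.3f}")
    if ratio < 0.8:
        print("Potential disparity: review features, data, and thresholds.")
```

A low ratio is a signal to investigate rather than proof of unfairness, since groups can differ in legitimate, job-related ways.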

AI governance supports compliance, trust, and consistent delivery. It includes clear roles, policies, risk controls, and ethical standards. Good governance often includes ethical AI committees and alignment with regulations like GDPR and CCPA. Legal, privacy, and compliance teams should be involved from the start so systems are traceable and auditable. Leadership development that includes AI ethics, plus a cross-functional AI governance task force, helps organizations test systems closely and keep decisions fair, supporting responsible innovation.

How Can Organizations Address Change Management and Culture Barriers?

AI implementation is not only a technical project; it also brings a major shift in how people work. Resistance to change and misunderstandings about AI can slow adoption and prevent organizations from getting full value.

Sources of Resistance to AI Adoption

The strong hype around AI has created many opinions and misconceptions across employees, managers, and executives. A major cause of resistance is fear about job impact. Since 85% of employees think AI will affect their roles soon, many worry about replacement instead of support. A lack of understanding about how AI can assist people, rather than remove them, can lead to skepticism and low engagement.

AI also changes workflows, tools, and sometimes decision-making authority. In hierarchical organizations, this can create resistance, especially where uncertainty is not welcome. Projects can also get stuck in silos if teams do not share goals and buy-in. Leaders often say employees are not ready, while employees may already be experimenting more than leaders realize. That gap shows why employees need to take part in shaping the change, not just receiving it.

Steps to Build AI Literacy and Foster Innovation

To reduce resistance, organizations should build strong change management into AI plans. A key step is building AI literacy across the workforce. This can be done with tiered training that covers both benefits and risks, plus ongoing learning that fits into daily work. Customized training, toolkits, manuals, and hands-on practice help people feel confident using AI tools.

To support a culture that can adapt, organizations should support collaboration and experimentation. Cross-functional teams that mix domain experts, developers, data scientists, and business leaders help create tools that people will actually use. Small, low-risk pilots help teams learn and adjust, and they make space for mistakes that lead to learning. Leadership support matters too: leaders should fund the work, recognize progress, and set clear rules for responsible AI. Reverse mentoring (AI specialists coaching executives) can also close knowledge gaps. Gathering employee feedback and using a bottom-up approach during pilots builds shared ownership and increases adoption.

How Can Explainability and Transparency Improve AI Trust?

As AI becomes more capable and more independent, a major issue grows: many models are hard to explain. If people cannot understand why a model made a decision, trust drops, adoption slows, and risks rise, especially in areas where accountability matters.

The Need for Interpretable AI Models

Many advanced AI systems, especially deep learning models, are hard to interpret. They can be accurate, but their reasoning is unclear. This makes it difficult to explain results, manage systems, and regulate outcomes. In healthcare, finance, and law, explainability is often required for ethical and regulatory reasons.

If a credit model rejects a loan and cannot explain why, customers may complain and regulators may take interest. It is also harder to detect bias. In healthcare, doctors need to know why an AI suggests a diagnosis before trusting it. Without interpretability, teams struggle to debug models, audit behavior, meet compliance needs, and gain user confidence. Lack of explanation reduces accountability and slows improvement.

Tools and Frameworks for Increasing Model Transparency

Trust improves when models and processes are clearer. One option is model simplification: use simpler models (like decision trees or linear models) when they are good enough. If complex models are needed, use them alongside simpler models to validate results and help explain outcomes.

Another option is Explainable AI (XAI). Tools like LIME and SHAP help show which inputs influenced a prediction most. These methods help explain specific decisions and highlight key drivers. Strong documentation also matters: keep records of data sources, training steps, settings, and model changes to support audits and reviews.
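SHAP is built on Shapley values, which split a prediction's change from a baseline fairly across the input features. The sketch below does not call the SHAP library; it computes exact Shapley values by brute force for a tiny, hypothetical credit-scoring function, which is only practical for a handful of features. The scoring formula, feature names, and baseline applicant are assumptions made for illustration.

```python
from itertools import permutations


def score(features):
    """Hypothetical credit score: higher income helps, missed payments hurt."""
    return (
        2.0 * features["income_k"]
        - 30.0 * features["missed_payments"]
        + 0.5 * features["years_at_job"]
    )


def shapley_values(model, instance, baseline):
    """Average each feature's marginal contribution over all feature orderings."""
    names = list(instance)
    totals = {n: 0.0 for n in names}
    orders = list(permutations(names))
    for order in orders:
        current = dict(baseline)      # start from the reference applicant
        previous = model(current)
        for name in order:
            current[name] = instance[name]   # switch one feature to the real value
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {n: totals[n] / len(orders) for n in names}


# Hypothetical applicant and reference point.
APPLICANT = {"income_k": 60, "missed_payments": 2, "years_at_job": 4}
BASELINE = {"income_k": 40, "missed_payments": 0, "years_at_job": 4}

if __name__ == "__main__":
    for name, value in shapley_values(score, APPLICANT, BASELINE).items():
        print(f"{name}: {value:+.1f}")
```

The contributions always add up to the difference between the applicant's score and the baseline score, which is what makes this kind of explanation easy to present to a customer or auditor.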

Finally, open communication with stakeholders is important. Explain how the model works, what it can do, and where it may fail. When users, customers, and regulators understand the limits and strengths of AI systems, trust grows and adoption becomes easier.

What Are Effective Strategies for Overcoming AI Implementation Challenges?

Solving AI implementation problems takes more than fixing single issues. It requires a full strategy that combines technology choices with changes in how the organization works. Success comes from building a system that supports new ideas, controls risk, and ties AI work to business goals.

Building Cross-Functional Teams and Bridging Silos

One strong approach is reducing silos and improving collaboration. AI projects often stall when knowledge sits inside a small data science group that is disconnected from daily operations. Organizations should build cross-functional teams that include AI specialists plus people from IT, finance, security, legal, HR, and business strategy.

This helps AI solutions match real business needs and constraints, while also covering ethics and security. Ongoing feedback between technical teams and business leaders keeps alignment. If end-users are involved early, tools are more likely to fit real workflows and deliver value, instead of stalling at PoC.

Aligning AI Projects with Business Objectives

Many AI efforts fail because they are not tied clearly to business goals. To succeed, every AI project should target a real business problem and aim for measurable impact. This means avoiding a “tech for its own sake” mindset and evaluating use cases by business value, feasibility, and ability to scale.

A clear AI strategy should connect directly to company goals, such as improving customer experience, lowering costs, creating new revenue, or improving analytics. Frameworks like Balanced Scorecards or OKRs can translate broad goals into specific AI initiatives with measurable targets. This keeps teams focused and makes spending easier to justify.

Continuous Learning, Governance, and Iteration

AI work does not end after launch. It needs ongoing learning, adjustment, and improvement. Organizations should support training so teams stay current, and give employees room to test ideas and take reasonable risks. Leaders should support this with resources and recognition, and treat failures as learning when done responsibly.

At the same time, AI governance is required. A clear governance framework should define roles, responsibilities, risk management, and ethical standards. Legal, privacy, and compliance teams should be part of the work from the start so systems can be traced and audited. Regular risk reviews, bias checks, and ethical committees help keep deployment responsible. An iterative approach, where models are retrained and adjusted based on feedback, helps teams get better results and lasting value.

Turning AI Challenges into Long-Term Opportunities

AI implementation can be difficult, but it also gives organizations a major chance to improve. Companies that treat these problems as solvable can use them to strengthen innovation, resilience, and long-term growth. The ability to keep up with disruptive changes, update operating models, and raise AI maturity, all while staying safe and ethical, will separate tomorrow’s leaders from everyone else.

Making AI work well over time means building a culture that supports adaptation, ongoing learning, and cross-team collaboration. It also means treating data quality as the base of reliable AI, reducing the skills gap through training and partnerships, and connecting AI to current systems while planning for scale. Strong governance, ethical rules, and serious security from day one are not just compliance steps; they help build trust and support responsible innovation. With better transparency and explainability, companies can reduce fear and help employees and stakeholders use AI with confidence.

AI does not have to be perfect to be useful; it has to have a clear purpose and be able to adjust over time. The long-term opportunity comes from turning AI from a small experiment into a scalable capability that produces value and supports business goals. By dealing with each challenge carefully, businesses can reach AI’s full potential and become more flexible and better prepared for an AI-driven future.